TL;DR

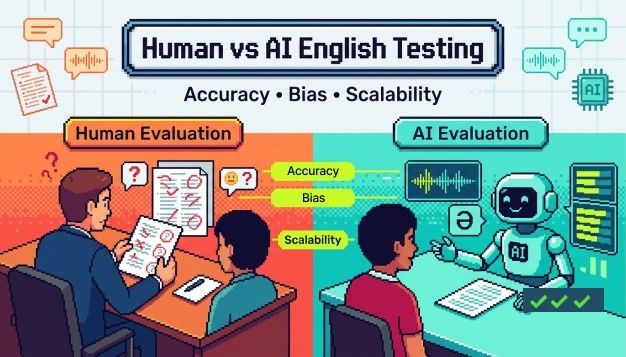

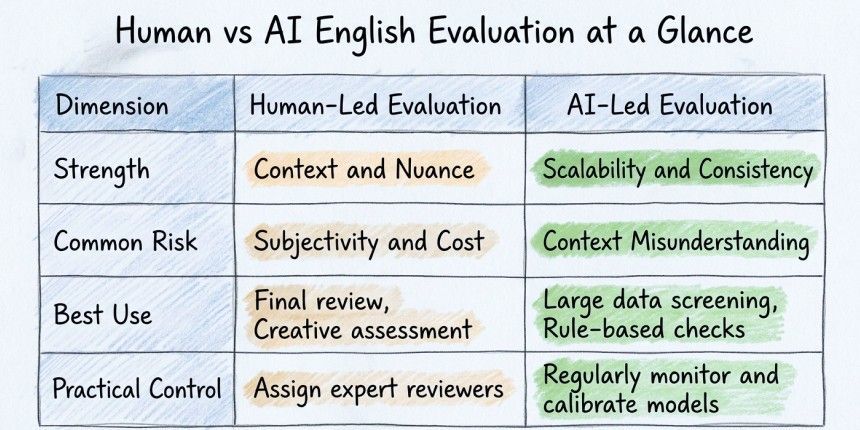

An English proficiency test for hiring only works if it tells you how a candidate will actually perform on the job. Humans are good at reading context, but their scores can shift depending on who's in the room. AI English assessment keeps things consistent at scale. The smartest approach combines both: AI screens first, humans handle the close calls.

What Does ‘Accuracy’ Mean in Hiring-Focused English Evaluation?

In hiring, accuracy comes down to one question: can this person communicate well enough to do the job?

A support rep needs to stay calm and clear when a customer is frustrated. A salesperson needs to hold attention and make a point without rambling. An internal team member needs to write updates that don't need three follow-up emails to understand.

A good English assessment test for hiring measures those things. Not grammar rules. Not classroom performance.

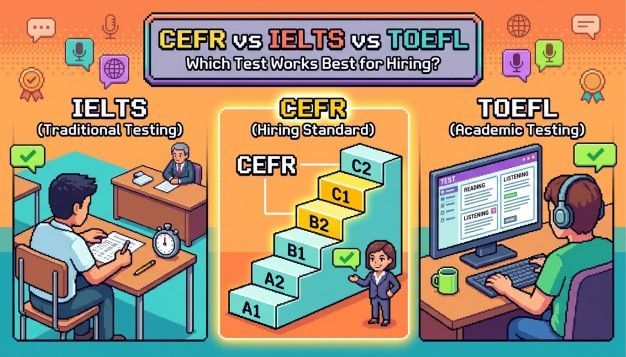

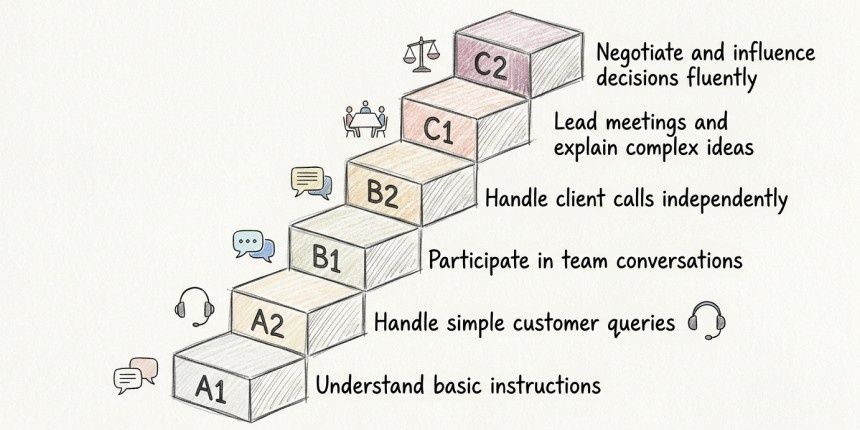

This is where CEFR helps. The Common European Framework of Reference for Languages defines six levels, from A1 to C2, each described through real-world can-do statements. When your English proficiency test for hiring is CEFR-based, a cutoff such as B2 means the same thing no matter who's interviewing or where the candidate is based.

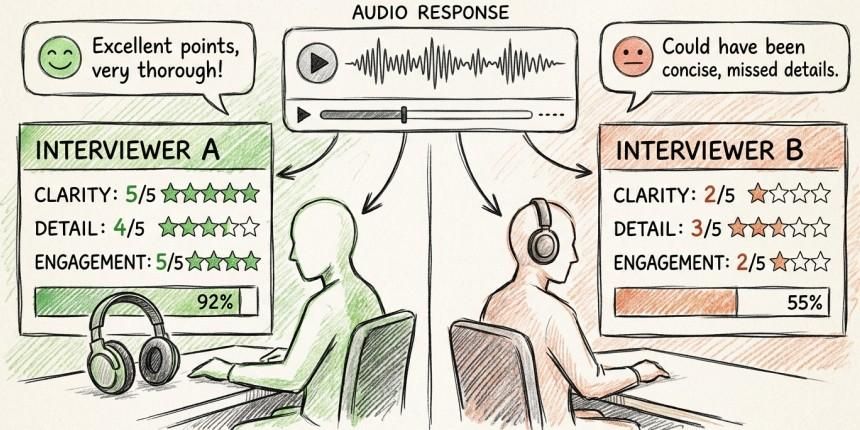

Where Do Human Scores Drift, and Why Does It Matter?

Human evaluators bring real strengths to the table. They notice when a candidate says ‘Sorry, let me rephrase’ and recognise that as a sign of self-awareness. They pick up on tone and professionalism. They can tell if someone asks good, clarifying questions.

But here's the problem: consistency.

Research published in Frontiers in Psychology found real differences in how raters score spoken English, and accent familiarity plays a big part. Raters tend to score unfamiliar accents lower, even when the message is perfectly clear. In an English communication test used in interviews, that's a serious problem: two equally strong candidates can walk away with very different results based purely on who interviewed them.

You can't fix that with one training session. It needs a system-level solution.

How Does AI English Evaluation Scale, and What Breaks at Volume?

The biggest win with AI English assessment is simple: it scores the same way every time. When you're screening hundreds of candidates for the same role, that consistency matters a lot.

But AI has its own weak spots.

Generic prompts can reward people who sound polished but say very little. Poorly designed scenarios miss what the role actually needs. And if the training data skews toward certain accents, the system will too.

The fix is not to avoid AI. It's to use it wisely.

Let AI handle the first screen. Let humans step in for edge cases and final decisions. That split is practical, fair, and easy to audit.

Why Choose Hyring's English Proficiency Test?

There are a lot of AI tools out there. The real question is whether a given tool gives you hiring-ready results fast enough to make a difference.

Hyring's English Proficiency Test was built for that. It uses AI scoring tied to CEFR standards and is designed for global teams hiring multilingual talent. Over 5,000 HR teams trust it.

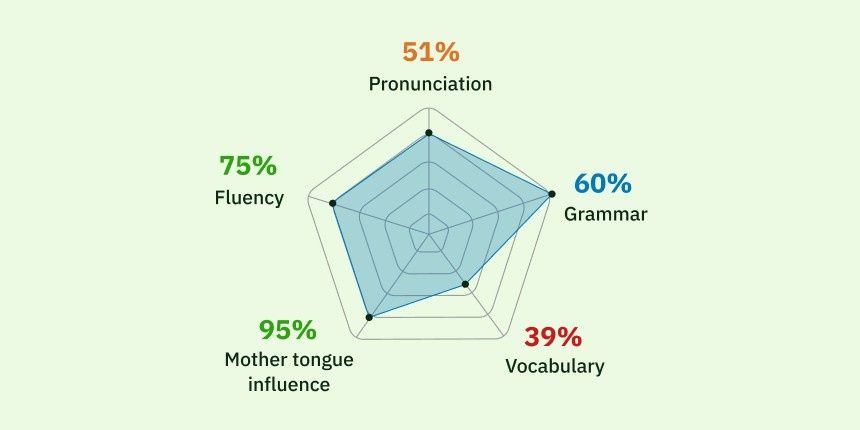

What makes it useful is the rubric. Hyring scores five things that actually connect to job performance: fluency, vocabulary, mother tongue influence, grammar, and pronunciation. The mother tongue influence score is especially helpful. It pushes teams to ask: is this candidate unclear, or just unfamiliar-sounding? That distinction changes a lot of hiring decisions.

Hyring also describes its CEFR levels in plain workplace terms. For example, it describes B2 as the ability to communicate clearly and confidently in most job situations without needing constant support. Descriptions like that make it easy to get your whole team on the same page about what ‘good enough’ looks like.

Use Cases You Can Run This Week

Customer-facing hiring sprints: Run the English assessment test for hiring early. Filter to the top band, then use your live interview time only where it counts.

Distributed recruiting teams: Use the CEFR levels and five-factor scores as a shared language across panels, so every panel grades English against the same standard.

Role-specific cutoffs: Set B1 for internal roles and B2 or above for client-facing ones. Review near-cutoff candidates instead of auto-rejecting them. The English proficiency test for hiring becomes a tool to guide decisions, not just cut people out.
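The cutoff logic above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Hyring's implementation: the role names, the `route_candidate` function, and the rule that a candidate exactly one CEFR level below the cutoff counts as near-cutoff are all hypothetical choices made for the example.

```python
from enum import IntEnum


class CEFR(IntEnum):
    """CEFR levels in ascending order, so comparisons like >= work."""
    A1 = 1
    A2 = 2
    B1 = 3
    B2 = 4
    C1 = 5
    C2 = 6


# Hypothetical role categories and cutoffs, mirroring the example above:
# B1 for internal roles, B2 for client-facing ones.
ROLE_CUTOFFS = {
    "internal": CEFR.B1,
    "client_facing": CEFR.B2,
}


def route_candidate(level: CEFR, role: str) -> str:
    """Return 'advance', 'human_review', or 'decline' for one candidate.

    Assumption: a candidate exactly one level below the cutoff counts as
    near-cutoff and goes to a human reviewer instead of being auto-rejected.
    """
    cutoff = ROLE_CUTOFFS[role]
    if level >= cutoff:
        return "advance"
    if level == cutoff - 1:
        return "human_review"
    return "decline"


if __name__ == "__main__":
    print(route_candidate(CEFR.B2, "client_facing"))  # advance
    print(route_candidate(CEFR.B1, "client_facing"))  # human_review
    print(route_candidate(CEFR.A2, "client_facing"))  # decline
```

Treating levels as an ordered enum keeps rules like ‘B2 or above’ explicit, and the review band is a single condition you can widen or narrow per role.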

Hyring offers test durations of 4, 10, and 15 minutes depending on your plan. Higher tiers include accent insights, cognitive signals, and weighted scoring criteria. All reports come with an AI summary and full transcripts.

If your current English check still comes down to gut feel, Hyring's EPT is a clean first step. Start with one role, one cutoff, and a small review lane.

How Do You Reduce Bias in Human vs AI Scoring?

Bias control only works when you can measure it.

Keep your dimension scores visible. Use prompts that match the actual job. Align your human interviewers to the same CEFR language used in the AI system. And track pass rates by role, location, and candidate group. If you see a big gap somewhere, dig into it.

Here's a quick checklist:

- Keep all dimension scores visible and on record

- Use job-relevant prompts and sample tasks where possible

- Train human interviewers with the same rubric language

- Review near-cutoff decisions every month

- Build a human override lane and require a short written reason for each override
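Tracking pass rates by group and flagging gaps can start as a small script. The sketch below assumes you can export (group, passed) pairs for one role; the group labels are hypothetical, and the 0.8 ratio borrows the ‘four-fifths’ rule of thumb from US adverse-impact analysis as a starting threshold, not as a standard this article or Hyring prescribes.

```python
from collections import defaultdict


def pass_rates(results):
    """Compute the pass rate per group from (group, passed) pairs."""
    totals = defaultdict(int)
    passes = defaultdict(int)
    for group, passed in results:
        totals[group] += 1
        if passed:
            passes[group] += 1
    return {g: passes[g] / totals[g] for g in totals}


def flag_gaps(rates, min_ratio=0.8):
    """Return groups whose pass rate is below min_ratio x the highest rate.

    min_ratio=0.8 follows the 'four-fifths' rule of thumb; it marks where
    to dig in, it is not a verdict on bias.
    """
    best = max(rates.values())
    if best == 0:
        return []  # nobody passed anywhere, so there is no ratio to compare
    return sorted(g for g, r in rates.items() if r / best < min_ratio)


if __name__ == "__main__":
    # Hypothetical outcomes for one role, grouped by candidate location.
    results = [
        ("location_a", True), ("location_a", True),
        ("location_a", True), ("location_a", False),
        ("location_b", True), ("location_b", False),
        ("location_b", False), ("location_b", False),
    ]
    rates = pass_rates(results)
    print(rates)             # {'location_a': 0.75, 'location_b': 0.25}
    print(flag_gaps(rates))  # ['location_b']
```

A flagged group is a prompt to review transcripts and dimension scores for that group, not proof of bias on its own. Small samples can swing widely, so check how many candidates sit behind each rate before reading much into a ratio.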

Hyring's own published material flags data bias as a real risk in AI-driven testing and frames the goal as balancing speed with fairness. That's the right way to think about it. No tool is neutral; the question is whether yours is being monitored.

Key Takeaways

- Accuracy in an English proficiency test for hiring means job-ready communication, not perfect grammar.

- Human raters catch nuance but are vulnerable to drift, especially around accent familiarity.

- AI English assessment tools deliver a consistent baseline at scale but need monitoring and a human review step.

- Hyring's EPT stands out for its CEFR anchoring, clear five-factor rubric, and flexible test lengths.

Frequently Asked Questions (FAQs)

1. Is CEFR actually useful for hiring thresholds?

Yes. The A1 to C2 scale uses real-world can-do descriptions that map closely onto workplace communication. It gives recruiters and hiring managers a shared language for what ‘proficient enough’ means, which cuts down on disagreements and keeps your process consistent.

2. When should a human override an AI score?

When the candidate is close to the cutoff, when the role depends heavily on communication, or when other signals strongly contradict the language score.
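These three triggers, plus the checklist rule that every override needs a written reason, can be expressed as a small sketch. Everything here is hypothetical: the `Override` record, the function names, the candidate and reviewer IDs, and the assumption that ‘close to the cutoff’ means exactly at the cutoff or one CEFR level below it.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Override:
    """One logged human override of an AI language score (hypothetical schema)."""
    candidate_id: str
    ai_level: str
    final_decision: str
    reason: str
    reviewer: str
    logged_on: date


def should_flag_for_override(levels_from_cutoff: int,
                             communication_heavy_role: bool,
                             conflicting_signals: bool) -> bool:
    """Return True if any of the three override triggers applies.

    levels_from_cutoff is the candidate's CEFR level minus the role cutoff
    (e.g. -1 means one level below). Assumption: 'close to the cutoff'
    means exactly at the cutoff or one level below it.
    """
    near_cutoff = -1 <= levels_from_cutoff <= 0
    return near_cutoff or communication_heavy_role or conflicting_signals


def record_override(log: list, candidate_id: str, ai_level: str,
                    final_decision: str, reason: str, reviewer: str) -> Override:
    """Append an override to the log, refusing it without a written reason."""
    if not reason.strip():
        raise ValueError("Every override needs a short written reason.")
    entry = Override(candidate_id, ai_level, final_decision,
                     reason.strip(), reviewer, date.today())
    log.append(entry)
    return entry


if __name__ == "__main__":
    overrides: list = []
    if should_flag_for_override(-1, communication_heavy_role=True,
                                conflicting_signals=False):
        record_override(overrides, "cand-001", "B1", "advance",
                        "Handled the escalation role-play clearly.", "reviewer-a")
    print(len(overrides))  # 1
```

Rejecting blank reasons at the point of entry keeps the override lane auditable, which is what makes the monthly near-cutoff review possible.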

3. What's the fastest way to get this running?

Pick one role. Set one CEFR cutoff. Run the English communication test for interview screening as the first gate. Review near-cutoff candidates by hand instead of auto-rejecting them.

4. What's the biggest fairness risk to watch for?

Accent-related skew. Track your pass rates by candidate group and audit outcomes regularly. Don't assume any system, human or AI, is fair without checking the data.