Hiring has a trust problem. It's not new, but in 2026, it looks very different from what it used to be.

A decade ago, the biggest integrity risk in an interview was a candidate exaggerating their resume. Maybe they inflated a job title, or they stretched a timeline. That gave a misleading picture of the candidate, but it was manageable.

Today, the risks are structural. Candidates can generate expert-level answers using AI tools in seconds. They can have someone else sit next to them on a video call and help. The stakes have changed. And so the systems protecting interview integrity had to change with them.

Integrity Is No Longer Just an HR Concern

Here's something that has shifted in the last two years. Interview integrity used to live entirely within the recruiting function. It was the hiring manager's job to spot a red flag. The recruiter's instinct to catch inconsistencies. The reference check to confirm the story.

72% of recruiters report encountering AI-generated fake resumes or credentials. 45% of developers admit to using AI during coding assessments. 31% of hiring managers have interviewed someone who later turned out to be using a false identity.

The conversation has moved from how to spot a liar to how to design a process where dishonesty has nowhere to hide. That's a fundamentally different starting point. And it's where AI comes in.

The Real Problem Is Not Bad Candidates

Most conversations about interview integrity focus on fraud. Fraud is a real issue, and it deserves attention. But it's only part of the picture.

The deeper problem is signal quality. Every interview is supposed to generate a signal about whether someone can do the job. But traditional interviews are surprisingly bad at this.

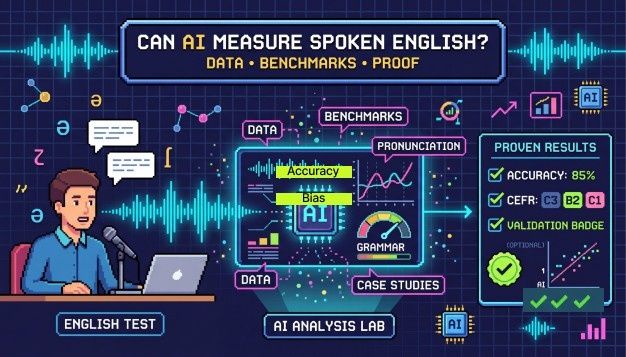

Now layer generative AI on top of that. A candidate uses ChatGPT to craft a perfect answer to a behavioral question. The interviewer is impressed. They score the candidate highly. But what signal did that interview actually produce? It measured the candidate's ability to prompt an AI tool. Not their ability to do the work.

This is the integrity crisis that doesn't get enough attention. It's not always about deception. Sometimes it's about a broken feedback loop where the interview measures the wrong thing entirely. AI-powered hiring platforms are starting to fix this by analyzing not just what a candidate says, but how they arrive at their answer. Response timing, reasoning depth, consistency across multiple questions. These are the signals that actually correlate with capability.

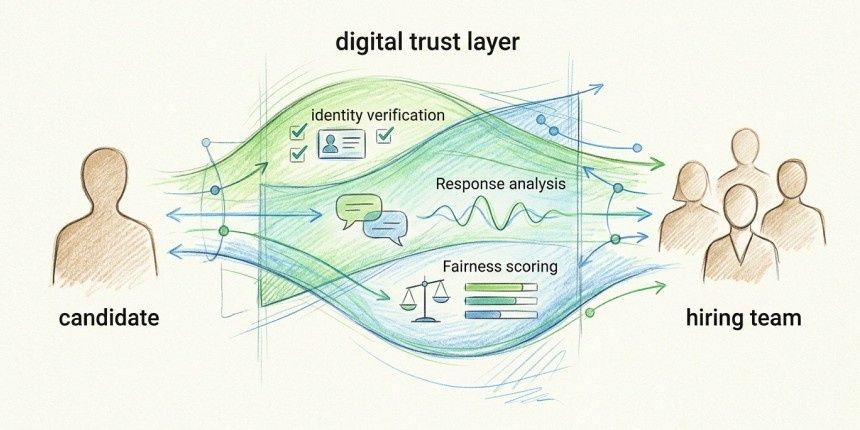

Identity Is the Foundation

You can't evaluate someone's skills if you do not know who you're talking to. That sentence sounds obvious. But the scale of identity fraud in hiring has forced it back to the center of the conversation.

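Modern AI handles this through facial recognition, liveness detection, and browser activity monitoring. Facial recognition checks that the person on camera is the person who applied, liveness checks confirm that a real person is present rather than a photo or a recording, and browser monitoring looks for signs of external help. All this happens in seconds. It's passive, and it eliminates an entire category of fraud.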

Watching Behavior Without Creating a Police State

This is where a lot of companies get it wrong. They hear "behavioral monitoring" and immediately picture invasive proctoring software that tracks every eye movement and keystroke, the kind that makes candidates feel like criminal suspects. That approach backfires. It creates anxiety and drives away strong candidates. It generates many false positives, and hiring teams stop trusting the alerts.

The shift in 2026 is toward contextual monitoring: AI systems that understand behavior in context rather than flagging isolated actions. A candidate glancing to the side for a moment is normal. A candidate whose eyes consistently track to a specific off-screen position while their answer quality suddenly improves is a pattern worth noting.
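The difference between flagging an isolated action and flagging a pattern can be sketched in a few lines of Python. This is a minimal illustration, not a description of how any particular platform works: the `AnswerWindow` record, its `off_screen_ratio` and `quality` fields, and all three thresholds are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class AnswerWindow:
    """One answer, summarized. Both fields are hypothetical signals."""
    off_screen_ratio: float  # share of the answer spent looking off-screen, 0..1
    quality: float           # rubric score for the answer, 0..10


def contextual_flag(windows, gaze_threshold=0.5, quality_jump=2.0, min_hits=2):
    """Flag only when sustained off-screen gaze coincides with a quality jump.

    A single glance away never triggers a flag on its own.
    """
    looking_away = [w for w in windows if w.off_screen_ratio >= gaze_threshold]
    on_screen = [w for w in windows if w.off_screen_ratio < gaze_threshold]
    if len(looking_away) < min_hits or not on_screen:
        return False  # not enough evidence to call it a pattern
    baseline = mean(w.quality for w in on_screen)
    away_quality = mean(w.quality for w in looking_away)
    return away_quality - baseline >= quality_jump


# Answers improve sharply exactly when the candidate looks away: flagged.
interview = [
    AnswerWindow(0.05, 5.0),
    AnswerWindow(0.10, 5.5),
    AnswerWindow(0.70, 8.5),
    AnswerWindow(0.80, 9.0),
]
print(contextual_flag(interview))  # True

# One strong answer with a glance away is not a pattern: not flagged.
one_glance = [AnswerWindow(0.05, 5.0), AnswerWindow(0.60, 9.0), AnswerWindow(0.10, 5.5)]
print(contextual_flag(one_glance))  # False
```

The point of the design is that neither signal is enough alone. Looking away without a change in answer quality, or a better answer without a change in gaze, leaves the candidate unflagged.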

The same applies to audio, where AI can now detect comparable patterns with increasing accuracy.

The key word is proportionality. Good integrity tools observe enough to catch genuine red flags without making the process feel hostile. That balance is hard to get right. But it's essential.

Consistency as an Integrity Measure

There's one dimension of integrity that rarely makes headlines but has an enormous impact on hiring quality: consistency.

When two interviewers evaluate the same candidate and reach wildly different conclusions, that's an integrity failure. When one candidate gets a tough technical deep-dive, and another gets a casual chat for the same role, that's an integrity failure. When unconscious bias around someone's accent or appearance influences a score, that's an integrity failure too.
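One simple way to surface the first kind of failure is to measure how far apart interviewers' scores for the same candidate are. The sketch below assumes a hypothetical 1-to-5 score scale and an arbitrary disagreement threshold (`max_spread`); a real process would have to choose both.

```python
from statistics import pstdev


def disagreement(scores_by_interviewer):
    """Population standard deviation of the scores given to one candidate."""
    return pstdev(list(scores_by_interviewer.values()))


def needs_review(scores_by_interviewer, max_spread=1.0):
    """True when interviewers disagree by more than the assumed threshold."""
    return disagreement(scores_by_interviewer) > max_spread


# Hypothetical scores on a 1-to-5 scale.
split_panel = {"interviewer_a": 5, "interviewer_b": 2}
aligned_panel = {"interviewer_a": 4, "interviewer_b": 4, "interviewer_c": 3}

print(needs_review(split_panel))    # True
print(needs_review(aligned_panel))  # False
```

A flagged candidate is not a rejected one. The flag only says that the panel's scores cannot be trusted yet and that the evaluation deserves a second look.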

AI addresses this by enforcing structure at scale. Same questions, same rubrics, and same evaluation criteria, every time. Across every interviewer and every candidate. No human process can maintain that level of consistency across hundreds of interviews a month, but AI can.
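In practice, enforcing structure largely means that every evaluation runs through the same rubric. As a rough sketch, with hypothetical criteria and weights that are not taken from any real product, a shared rubric can be a single data structure that every scorecard is computed from:

```python
# Hypothetical criteria and weights; the weights sum to 1.0.
RUBRIC = {
    "problem_solving": 0.4,
    "communication": 0.3,
    "role_knowledge": 0.3,
}


def score_candidate(ratings, rubric=RUBRIC):
    """Return the weighted score, on the same scale as the ratings.

    Raises ValueError if the ratings skip a criterion or add one that is
    not in the rubric, so every candidate is scored on the same items.
    """
    if set(ratings) != set(rubric):
        missing = sorted(set(rubric) - set(ratings))
        extra = sorted(set(ratings) - set(rubric))
        raise ValueError(f"ratings do not match rubric: missing={missing}, extra={extra}")
    return sum(weight * ratings[criterion] for criterion, weight in rubric.items())


candidate_ratings = {"problem_solving": 4, "communication": 3, "role_knowledge": 5}
print(round(score_candidate(candidate_ratings), 2))
```

Rejecting incomplete or extended ratings is the part that matters here: it stops one candidate from being scored on a tough technical deep-dive while another is scored on a casual chat.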

Consistency doesn't just make the process fairer. It makes the data more useful. When every candidate is measured against the same framework, hiring teams can make real comparisons. They can spot patterns. They can identify what actually predicts success in a role, instead of relying on whoever "felt" like the best fit.

How Hyring Approaches This

At Hyring, we think about integrity as something that should be invisible to the candidate and obvious to the hiring team.

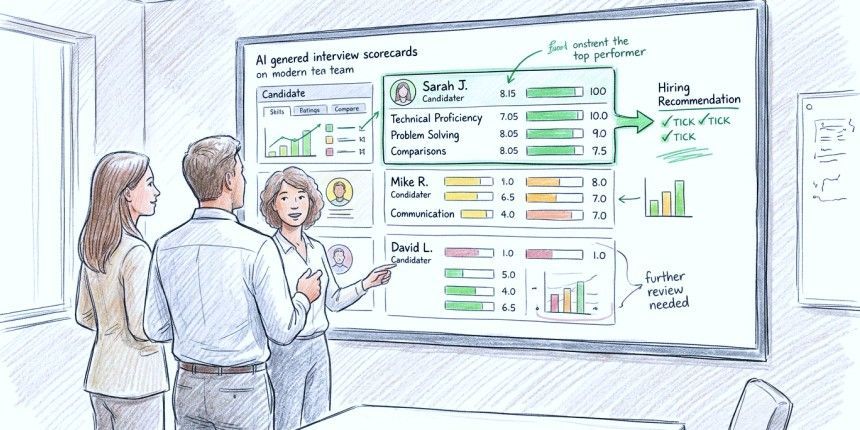

Our AI interview platform handles monitoring and structured evaluation across the entire interview lifecycle. Behavioral signals are analyzed during the conversation, and afterward, hiring teams receive objective, data-backed scorecards instead of subjective notes.

We built it this way because we believe integrity should not feel like friction. Candidates should walk away from a Hyring interview feeling like they were given a fair shot. Hiring teams should walk away knowing the signal they received is one they can trust. That is the standard we hold ourselves to.

The Road Ahead

Regulation is moving too. The EU AI Act already classifies AI used in employment decisions as high-risk, which brings stricter obligations for those systems. Similar frameworks are being discussed in the United States. Companies that build ethical, transparent AI into their hiring now will have an advantage when those rules formalize.

But regulation aside, there's a simpler reason to care about this. Every bad hire costs money. Every good candidate lost to a broken process costs an opportunity. Interview integrity isn't an abstract principle. It's a business outcome. And in 2026, AI is the most effective tool we have to protect it.

Frequently Asked Questions

1. What makes AI-driven integrity different from traditional proctoring?

Traditional proctoring tools flag surface-level actions like eye movement or tab switching. AI-driven integrity systems understand context. They analyze patterns over the course of an entire interview, distinguishing between normal human behavior and genuine red flags. The result is fewer false positives and a much better candidate experience.

2. Can AI tell if a candidate is using ChatGPT to answer questions?

Detection is improving rapidly. AI platforms now analyze response latency, linguistic consistency, and reasoning patterns to identify answers that may be externally generated. No system is perfect yet. But the gap between AI-assisted answers and organic responses is becoming easier to spot as the technology matures.

3. How does Hyring handle candidate privacy during integrity checks?

Integrity measures at Hyring are designed to run in the background. Behavioral analysis focuses on patterns, not individual actions. All data handling follows responsible AI practices. The goal is to protect fairness without compromising the candidate's experience or dignity.

4. Is structured interviewing really that much better than unstructured?

Structure removes the randomness that makes unstructured interviews unreliable. AI enforces that structure at scale, which means every candidate gets the same fair evaluation regardless of who interviews them.

5. What happens when regulations around AI in hiring get stricter?

Companies that already use transparent, auditable AI systems will be well-positioned. The direction of regulation, both in the EU and the U.S., favors explainability and fairness. Platforms built on those principles today won't need to overhaul their approach when compliance requirements catch up.