TL;DR

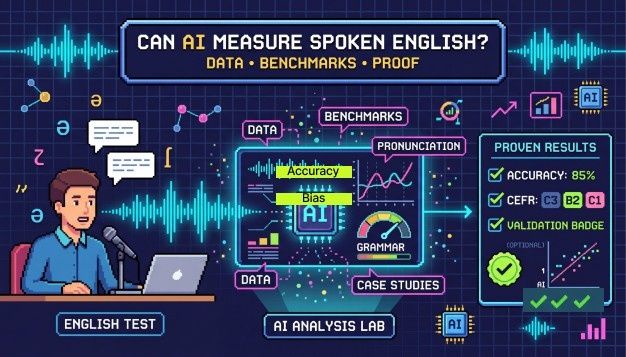

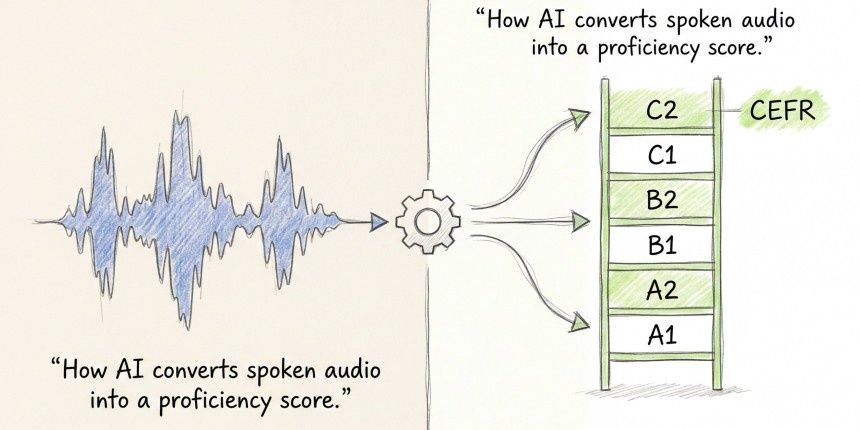

AI can measure spoken English reliably, but how well it does depends on how the system is designed, the accents candidates speak with, and the content they are tested on. Modern AI tests assess a candidate's fluency, grammar, pronunciation, and vocabulary, then report the result as a level on the CEFR scale. The technology is ready, but there are other important issues to consider when evaluating a candidate's English for the workplace.

Companies hiring overseas have been using AI English proficiency tests for a while. The practice isn't new, but the question remains whether the results are valid for candidates from cities like Lagos, Bangalore, or São Paulo. Do the scores reflect real skills? Mostly, yes.

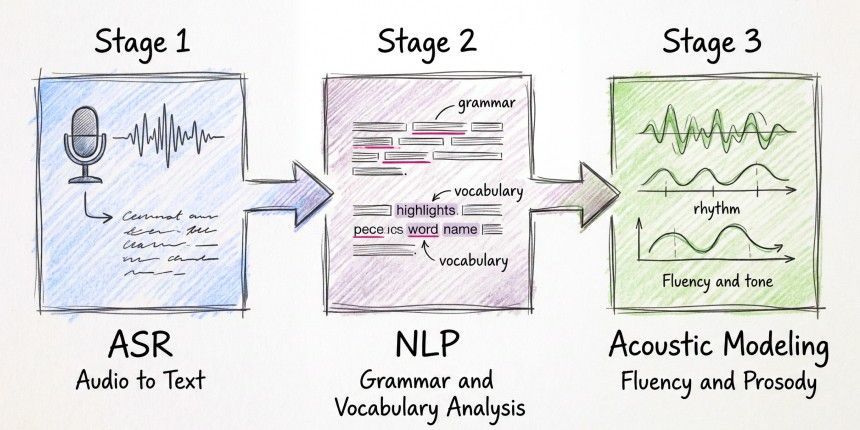

How AI Actually Evaluates Spoken English

The process starts with Automatic Speech Recognition (ASR), which converts speech into text and records the timing of words and pauses. Natural Language Processing (NLP) then evaluates grammar, vocabulary, and the candidate's command of the language. Finally, acoustic modeling measures fluency and delivery, including speech rhythm and tone.
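
To show what "measuring fluency" can mean in practice, here is a minimal Python sketch that derives simple timing features from ASR word timestamps. It assumes the ASR step returns each word with start and end times in seconds (the `Word` class and `fluency_features` function are hypothetical, not any vendor's API), and the 0.5-second pause threshold is an assumed value. Real scoring engines use many more features than speech rate and pauses.

```python
from dataclasses import dataclass


@dataclass
class Word:
    """One recognized word with start/end times in seconds (assumed ASR output shape)."""
    text: str
    start: float
    end: float


def fluency_features(words: list[Word], pause_threshold: float = 0.5) -> dict:
    """Timing-based fluency features from word timestamps.

    pause_threshold is an assumed cut-off (seconds) for treating a silent gap as a pause.
    """
    if not words:
        return {"words_per_minute": 0.0, "pause_count": 0, "mean_pause_sec": 0.0}
    total_time = words[-1].end - words[0].start
    # Silent gap between the end of each word and the start of the next
    gaps = [nxt.start - cur.end for cur, nxt in zip(words, words[1:])]
    pauses = [g for g in gaps if g >= pause_threshold]
    wpm = len(words) / total_time * 60 if total_time > 0 else 0.0
    return {
        "words_per_minute": round(wpm, 1),
        "pause_count": len(pauses),
        "mean_pause_sec": round(sum(pauses) / len(pauses), 2) if pauses else 0.0,
    }


# Hypothetical ASR output with one long hesitation after "handled"
sample = [
    Word("I", 0.0, 0.2),
    Word("handled", 0.3, 0.8),
    Word("customer", 1.6, 2.1),
    Word("escalations", 2.2, 3.0),
]
print(fluency_features(sample))
# {'words_per_minute': 80.0, 'pause_count': 1, 'mean_pause_sec': 0.8}
```

Features like these are typically combined with pronunciation and language features by a trained model rather than read off directly.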

What makes an English test for hiring different from plain transcription software? It produces a clear, scored result rather than just a transcript.

One useful method is structured prompts: the candidate answers a set question within a fixed time, which makes scores easier for recruiters to compare. Natural conversation would be more realistic, but it is hard to compare across many candidates, so most tests rely on guided tasks.

What the Benchmarks Show and What They Don't

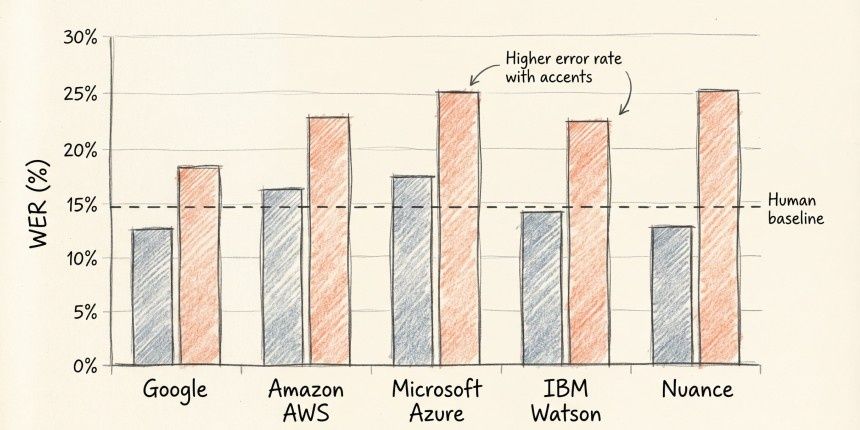

When testing ASR, the main measure is word error rate (WER): the number of words the system substitutes, deletes, or inserts, divided by the number of words in a reference transcript. Lower is better. Microsoft's 2016 research reported a 5.9% WER on conversational speech, matching professional human transcribers. This is impressive, but WER only shows how well the system recognizes words. It doesn't show whether someone can help a customer or pitch a proposal, and real workplace English requires those abilities, not just speech that a machine can transcribe.
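
The WER definition above is a word-level edit distance. A minimal Python sketch of the standard dynamic-programming calculation follows; the function name and example sentences are illustrative, and production toolkits also normalize punctuation and casing more carefully than this lowercase-and-split approach.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # dp[i][j] = minimum edits to turn the first i reference words into the first j hypothesis words
    dp = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dp[i][0] = i  # delete all i reference words
    for j in range(len(hyp) + 1):
        dp[0][j] = j  # insert all j hypothesis words
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dp[i][j] = min(
                dp[i - 1][j] + 1,         # deletion
                dp[i][j - 1] + 1,         # insertion
                dp[i - 1][j - 1] + cost,  # substitution (or match if cost is 0)
            )
    return dp[len(ref)][len(hyp)] / len(ref)


ref = "i can resolve your billing issue today"
hyp = "i can resolve you billing issue to day"
print(f"WER: {word_error_rate(ref, hyp):.1%}")  # WER: 42.9%
```

Here "your" becomes "you" and "today" is split into "to day", which counts as three edits against seven reference words. The speaker may have been perfectly clear; the errors belong to the recognizer, which is why WER says little about workplace communication on its own.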

A more important benchmark for hiring is how closely AI scores match human scores. ETS, which developed SpeechRater for the TOEFL exam, found a correlation of about 0.7 to 0.8 between AI and human scores. Two human raters scoring the same response agree at a similar level. AI is close to human performance but does not surpass it.
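
Agreement figures like these are usually Pearson correlations between two sets of scores for the same responses. The Python sketch below computes that coefficient for AI versus a human rater and for one human rater versus another. The scores are made up for illustration, so the printed values say nothing about SpeechRater or any other real system.

```python
import math


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson correlation between two score lists for the same set of responses."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least two scores each")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when all scores in a list are identical")
    return cov / math.sqrt(var_x * var_y)


# Made-up scores on a 0-4 scale for the same eight responses (illustrative only)
ai_scores = [3.1, 2.4, 3.8, 1.9, 2.7, 3.3, 2.2, 3.6]
rater_a = [3.0, 2.0, 4.0, 2.0, 3.0, 3.0, 2.5, 3.5]
rater_b = [3.5, 2.5, 3.5, 1.5, 2.5, 3.5, 2.0, 4.0]

print(f"AI vs rater A:      r = {pearson_r(ai_scores, rater_a):.2f}")
print(f"Rater A vs rater B: r = {pearson_r(rater_a, rater_b):.2f}")
```

Comparing the two numbers is the useful part: an AI-human correlation is only meaningful next to the human-human correlation on the same responses.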

Real-World Deployments: What Case Studies Reveal

Automated spoken English tests are widely used in BPO and customer service hiring. Their main benefit is consistency. A human rater assessing thirty applicants in one day may tire and judge later candidates differently from earlier ones. An automated test applies the same scoring model to every response.

Where AI Language Testing Has Real Limits

One big issue is background noise. If a candidate takes the test in a noisy place, their score can drop even if their English is good. Better test design can mitigate this, for example by checking audio quality before the test starts.
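
One way to build in that check is a pre-test audio gate. The Python sketch below is an assumption-laden illustration, not how any particular product works: it estimates signal-to-noise ratio by treating the loudest 10% of short frames as speech and the quietest 10% as background noise, and asks for a re-recording below an assumed 15 dB threshold. A synthetic tone stands in for speech; real systems would use proper voice activity detection.

```python
import math
import random


def estimate_snr_db(samples: list[float], frame_len: int = 400) -> float:
    """Rough signal-to-noise estimate in decibels.

    Assumes the loudest 10% of frames are mostly speech and the quietest 10%
    are mostly background noise; frame_len=400 is 25 ms at 16 kHz.
    """
    frames = [samples[i:i + frame_len]
              for i in range(0, len(samples) - frame_len + 1, frame_len)]
    if not frames:
        raise ValueError("recording is shorter than one frame")
    rms = sorted(math.sqrt(sum(s * s for s in f) / frame_len) for f in frames)
    k = max(1, len(rms) // 10)
    noise_floor = sum(rms[:k]) / k
    speech_level = sum(rms[-k:]) / k
    return 20 * math.log10(speech_level / max(noise_floor, 1e-12))


def audio_ok(samples: list[float], min_snr_db: float = 15.0) -> bool:
    """Pre-test gate; min_snr_db is an assumed threshold, not an industry standard."""
    return estimate_snr_db(samples) >= min_snr_db


def synthetic_recording(noise_level: float, rate: int = 16000, seed: int = 0) -> list[float]:
    """Half a second of background noise, then half a second of a 220 Hz tone standing in for speech."""
    rng = random.Random(seed)
    half = rate // 2
    silence = [rng.gauss(0.0, noise_level) for _ in range(half)]
    tone = [0.5 * math.sin(2 * math.pi * 220 * n / rate) + rng.gauss(0.0, noise_level)
            for n in range(half)]
    return silence + tone


print(audio_ok(synthetic_recording(noise_level=0.01)))  # True (quiet room)
print(audio_ok(synthetic_recording(noise_level=0.2)))   # False (noisy room)
```

Rejecting a bad recording before scoring is cheaper and fairer than scoring it and trying to correct the result afterwards.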

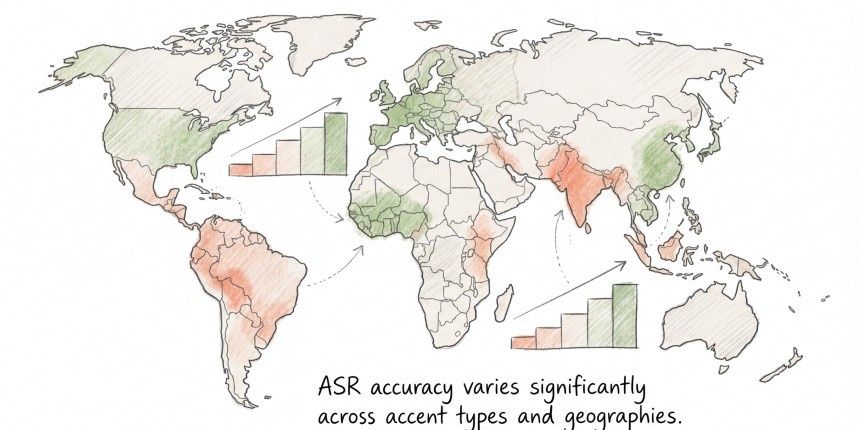

Another problem is accents. A study found that several commercial speech recognition systems made substantially more errors transcribing speakers of African American Vernacular English than white speakers. By extension, a candidate with a South Indian, Nigerian, or Brazilian accent could score poorly despite speaking clearly, if the system was not trained on enough speech like theirs.
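
A practical safeguard is to audit recognition accuracy separately for each accent group in your candidate pool. The Python sketch below averages per-recording WER by group and flags any group whose error rate exceeds the best group's by more than an assumed 25% tolerance. The group labels, WER values, and tolerance are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def wer_by_group(results: list[tuple[str, float]]) -> dict[str, float]:
    """Average per-recording WER for each accent group."""
    grouped: dict[str, list[float]] = defaultdict(list)
    for group, wer in results:
        grouped[group].append(wer)
    return {group: mean(wers) for group, wers in grouped.items()}


def flag_disparities(group_wer: dict[str, float], max_ratio: float = 1.25) -> list[str]:
    """Groups whose mean WER is more than max_ratio times the best group's (assumed tolerance)."""
    best = min(group_wer.values())
    return sorted(g for g, w in group_wer.items() if w > best * max_ratio)


# Made-up per-recording WERs from a hypothetical audit set (illustrative only)
audit = [
    ("US", 0.08), ("US", 0.10),
    ("South Indian", 0.12), ("South Indian", 0.14),
    ("Nigerian", 0.18), ("Nigerian", 0.16),
    ("Brazilian", 0.11), ("Brazilian", 0.09),
]
print(flag_disparities(wer_by_group(audit)))  # ['Nigerian', 'South Indian']
```

A flagged group is a prompt to investigate, for example by having human raters review a sample of those candidates' scores, rather than proof that the scores are wrong.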

Lastly, the test might not always measure what it claims. It could wrongly penalize a speaker for grammatical mistakes that are really regional phrasing or transcription errors. A human rater spots these easily; the algorithm has to be designed to handle them.

This doesn't mean we shouldn't use automated tests. It means the system's accuracy needs to be checked for the specific candidates it will assess.

Why CEFR Scoring Makes AI Results Usable

A score of 74/100 says little on its own. A CEFR level says much more. B1 means a candidate can handle everyday work conversations but may make mistakes. C1 means they can discuss complex topics fluently. This helps when deciding who will deal with clients.
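
Converting a raw score into a CEFR level comes down to a set of cut scores. The Python sketch below uses hypothetical cut scores on a 0-100 scale; a real test would set them through a standard-setting study against the CEFR descriptors, and different tests will place them differently. The `meets_requirement` helper is also hypothetical and shows how a level can be checked against a role's minimum.

```python
from bisect import bisect_right

# Hypothetical cut scores on a 0-100 scale, for illustration only.
CUT_SCORES = [20, 35, 50, 65, 80]  # minimum raw score for A2, B1, B2, C1, C2
LEVELS = ["A1", "A2", "B1", "B2", "C1", "C2"]


def to_cefr(raw_score: float) -> str:
    """Map a raw 0-100 score to a CEFR level using the cut scores above."""
    if not 0 <= raw_score <= 100:
        raise ValueError("raw_score must be between 0 and 100")
    return LEVELS[bisect_right(CUT_SCORES, raw_score)]


def meets_requirement(level: str, required: str) -> bool:
    """True if `level` is at or above the role's required CEFR level."""
    return LEVELS.index(level) >= LEVELS.index(required)


level = to_cefr(74)
print(level, meets_requirement(level, "B2"))  # C1 True
```

Under these made-up cut scores, 74 lands at C1; with different cut scores the same raw number could mean B2. That dependence is exactly why the raw score alone tells a recruiter so little.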

CEFR is a global standard for language skills, recognized by schools and businesses everywhere. A CEFR level means the same in Singapore, Germany, and Canada, which is helpful for international hiring.

From an accountability standpoint, a statement like "This candidate scored C1 on a validated test" can be checked. A statement like "They sounded fluent in the interview" cannot.

How Hyring's English Proficiency Test Fits Into Hiring

Hyring's English Proficiency Test assesses candidates in five areas: fluency, vocabulary, mother tongue influence, grammar, and pronunciation, each rated on CEFR levels from A1 to C2. Mother tongue influence is especially important: it looks at how a candidate's first language shapes their sentence structure, word choice, and speaking pace.

Hyring's test is built into the hiring workflow, so it assesses spoken English before recruiters review candidate profiles. This matters for high-volume global hiring, where human grading tends to become inconsistent over time as fatigue and bias creep in.

Think of it as a first-stage filter. A strong result should move a candidate forward, but a conversation is still needed before a decision.

Key Takeaways

- AI can score structured speaking tasks nearly as consistently as human raters, but free-form, natural speech is harder for it to score.

- Accent coverage and recording quality can significantly affect AI scores in hiring.

- CEFR levels make AI scores interpretable. A raw score alone says little; a CEFR level gives it context.

FAQs

1. Can an AI English proficiency test replace a human interview?

No, and that is not the goal. An AI test makes the first round of screening faster and more consistent, but a human interview is still needed before a final decision.

2. How accurate is AI at scoring spoken English compared to human raters?

Research shows that AI and human scores are closely aligned, with correlations of about 0.7 to 0.8. AI is good at measuring fluency, pronunciation, and grammar, but struggles with longer, less structured speech.

3. How does AI handle non-native English speakers fairly?

Fairness depends largely on how the AI is trained. A system trained on a diverse range of accents assesses non-native speakers more accurately. Tests that focus on communication skills rather than on sounding like a native speaker are fairer for international hiring.

4. What is CEFR, and why does it matter for an AI language test?

CEFR stands for the Common European Framework of Reference for Languages. It has six levels from A1 to C2 and is recognized worldwide, making results easy to interpret.

5. Is an automated spoken English assessment suitable for all hiring contexts?

It works best when spoken English is central to the job, as in customer service or team-based roles. If writing skills matter more, a written assessment is a better fit.